Human-in-the-Loop: Why Creative Teams Need Responsible AI Too

Insurance companies don’t deploy AI without guardrails. They think about risk, compliance, oversight, documentation, and accountability.

Designing for Roots, I’ve learned that AI without structure isn’t innovation — it’s exposure. In insurance, a model can’t just “work.” It must be explainable, documented, monitored, and reviewed by humans. There is always oversight.

Creative teams should apply the same discipline.

Responsible AI, in simple terms, means using AI in a way that is transparent, accountable, safe, and aligned with real-world standards. It means humans remain in control. It means decisions can be explained. It means risks are identified before they scale. And it means someone owns the outcome.

When we use AI to generate visuals at scale, we are also scaling risk.

Brand risk.

Copyright risk.

Accuracy risk.

Reputational risk.

And just like in insurance, the final responsibility always sits with a human.

Not the tool. Not the model. Not the prompt. A person.

Visuals at Scale: The Opportunity and the Risk

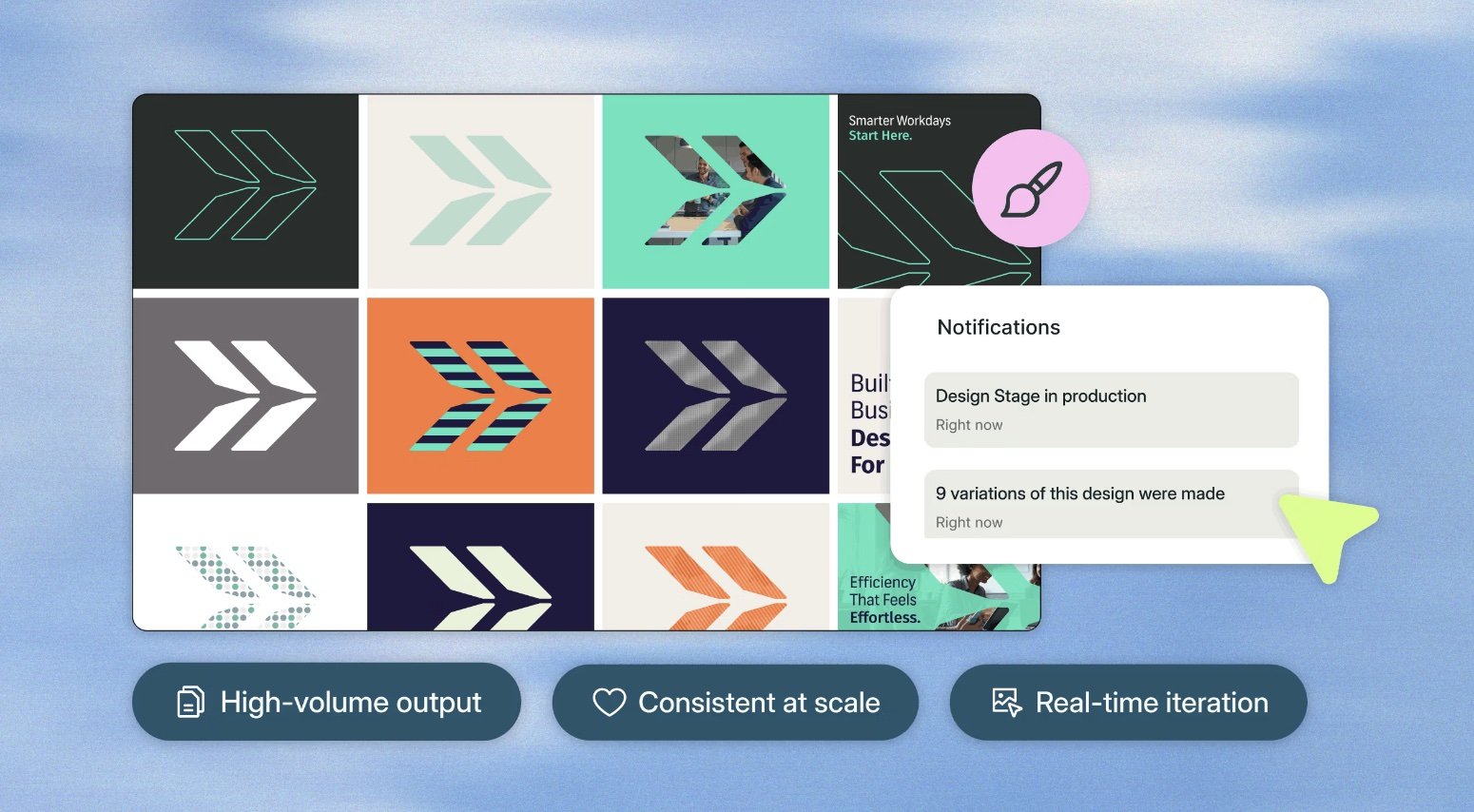

AI allows creative teams to generate hundreds of visual directions in minutes.

Campaign variants.

Audience-specific imagery.

Localized adaptations.

Concept explorations.

The scale is powerful.

But scale amplifies everything — including mistakes.

Subtle brand drift becomes visible inconsistency.

Weak concepts multiply into noise.

Copyright ambiguity spreads across channels.

Mediocre ideas feel impressive because there are many of them.

The danger isn’t bad visuals. The danger is unreviewed volume.

Superside shows how enterprises can scale creative output without sacrificing quality. Use their frameworks internally, then extend your capacity by partnering externally. I’m a big fan of their business model and the AI innovations that support it.

1. Brand Alignment: Volume Cannot Replace Intent

When visuals are created at scale, brand discipline becomes more important — not less.

AI can remix aesthetics endlessly. But brands are not aesthetic experiments. They are systems of meaning.

At Roots, where we work in insurance, clarity matters. Trust matters. Precision matters. You can’t afford visuals that feel trendy but vague. You can’t afford imagery that implies something the product doesn’t deliver.

Human-in-the-loop creative means:

Start with strategic clarity before generating.

Define visual principles, not just mood boards.

Approve the system behind the visuals, not just a few outputs.

Review outputs against brand intent, not just visual appeal.

A strong visual isn’t the one that looks “cool.”

It’s the one that reinforces positioning.

When creating at scale, humans must protect coherence.

2. Copyright & Ownership: Just Because It’s Generated Doesn’t Mean It’s Safe

Generative tools are powerful, but they operate in a legal landscape that is still evolving. When teams generate visuals casually — especially with stylistic prompts — they introduce uncertainty.

Responsible AI in creative means:

Avoiding prompts that mimic living artists or recognizable styles.

Reviewing visuals for resemblance to known brands, characters, packaging, or trade dress.

Understanding which model is being used and what data it was trained on.

Using enterprise-grade tools when risk matters.

For example, Shutterstock Enterprise AI includes licensed training data, IP indemnification, and human review safeguards. That combination of licensed inputs and human oversight significantly reduces exposure when generating visuals at scale. For more, check out this post on copyright and GenAI for creatives.

The principle is simple:

If you can’t confidently explain where the visual came from and why it’s safe, it shouldn’t ship.

3. Scale Should Increase Quality — Not Lower It

AI makes it easy to mistake quantity for creativity.

Fifty directions do not equal one strong idea.

Responsible AI means slowing down at the right moments:

Download the assets.

Step away from the interface.

Print a few out.

Put them on the wall.

React to them like it’s 2015, before AI.

Does the concept hold up?

Is the idea clear?

Is it emotionally intentional?

Would you defend this in a room full of stakeholders without saying, “the model suggested it”?

Screens compress judgment. Speed creates false confidence.

Distance restores clarity.

When generating visuals at scale, humans must filter with discernment.

4. Mentoring Designers in the AI Era

There’s another risk that doesn’t get discussed enough.

Junior designers entering the industry today may never experience design without AI assistance.

That’s not inherently bad. But without mentorship, it can weaken foundational thinking.

Design is not prompt writing.

It’s:

Framing the problem.

Defining the audience.

Clarifying the message.

Working within constraints.

Iterating intentionally.

Defending decisions.

Responsible AI in creative leadership means teaching designers to think before they generate.

Ask:

What problem are we solving?

What belief are we shifting?

What does this visual communicate?

Why this direction — not another?

AI should enhance creative intelligence.

It should not replace creative judgment.

If we scale visuals without scaling thinking, we lose the craft.

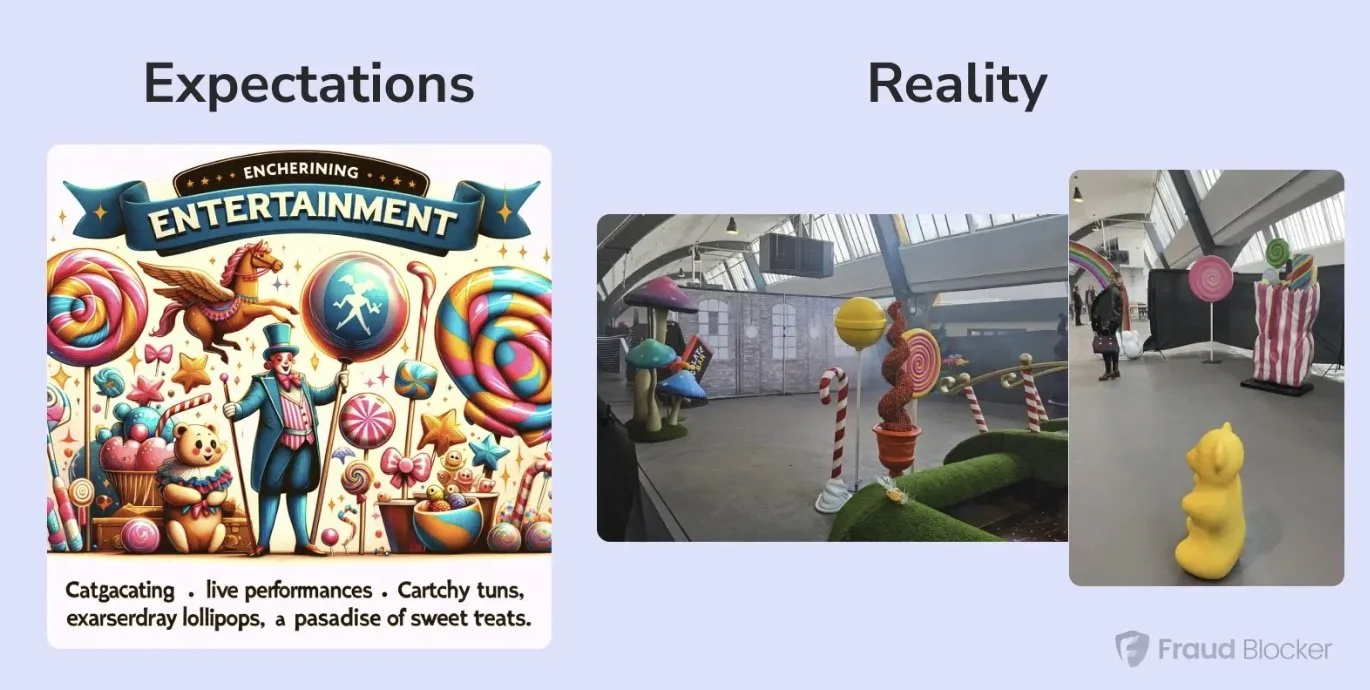

AI can amplify anything, including the wrong message. When it oversells weak products, it damages trust. When it exaggerates strong brands, it creates a gap between promise and reality. That’s why I agree with Fraud Blocker on brand transparency. AI should scale truth, not hype.

The Real Role of Humans in Creative AI

AI can generate.

Only humans can decide what deserves to exist.

Insurance companies understand that automation without governance creates liability. Creative teams should understand that automation without judgment creates dilution.

Human-in-the-loop doesn’t slow innovation.

It protects brand equity.

It protects originality.

It protects intellectual property.

It protects long-term value.